Here’s something most AI projects have in common: they don’t fail because the model was wrong. They fail because the data feeding it was a mess nobody caught in time.

That’s a hard lesson, and a lot of enterprises are learning it right now. After months of investment and expectation-setting, they’re staring at models that underperform, predictions nobody trusts, and engineers stuck debugging data pipelines instead of doing anything useful.

The problem isn’t AI. The problem is that most organizations weren’t built to feed AI. Their data lives in places it shouldn’t, structured in ways that made sense five years ago, maintained by teams who’ve never spoken to anyone working on machine learning. When those worlds collide, the gap becomes very obvious very fast.

So, what does it actually take to make data AI-ready? And why does it matter enough to prioritize before anything else?

That’s what this guide covers. Whether you’re deep in an internal initiative or working with AI Consulting Services to accelerate things, the engineering fundamentals are the same. Let’s get into them.

Structuring Data Pipelines for AI Consumption: Architecture, Quality, and Scale

Most data pipelines weren’t designed with AI in mind. They were built to move data from point A to point B to power dashboards, feed reports, and support billing. And they do that fine. But AI demands something different, and the gap between “good enough for analytics” and “good enough for model training” is wider than people expect.

Take schema stability. A reporting pipeline can tolerate a new column appearing unannounced; the dashboard just picks it up. A training pipeline can’t. Unexpected schema changes silently corrupt feature vectors in ways that might not surface until a model has already been trained on bad data. The same goes for latency. Analytics tools can absorb a few hours of lag. A real-time inference pipeline cannot.
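To make the schema point concrete, here is a minimal sketch of a check a training pipeline could run on each record before it becomes a feature vector. The column names and the `EXPECTED_SCHEMA` mapping are hypothetical, and a real pipeline would more likely rely on a schema registry or a validation library than on hand-written checks.

```python
from typing import Any

# Hypothetical expected schema for a training table: column name -> Python type.
EXPECTED_SCHEMA: dict[str, type] = {
    "customer_id": str,
    "signup_date": str,
    "monthly_spend": float,
}


def schema_violations(row: dict[str, Any], expected: dict[str, type]) -> list[str]:
    """Return a list of human-readable schema problems for one record."""
    problems = []
    for column in sorted(expected.keys() - row.keys()):
        problems.append(f"missing column: {column}")
    for column in sorted(row.keys() - expected.keys()):
        problems.append(f"unexpected column: {column}")
    for column in sorted(expected.keys() & row.keys()):
        value = row[column]
        if value is not None and not isinstance(value, expected[column]):
            problems.append(
                f"wrong type for {column}: expected {expected[column].__name__}, "
                f"got {type(value).__name__}"
            )
    return problems


good = {"customer_id": "C-1", "signup_date": "2024-01-05", "monthly_spend": 42.0}
drifted = {"customer_id": "C-2", "signup_date": "2024-01-06",
           "monthly_spend": "42", "promo": "X"}

print(schema_violations(good, EXPECTED_SCHEMA))     # []
print(schema_violations(drifted, EXPECTED_SCHEMA))  # two problems reported
```

A dashboard would happily ignore the extra `promo` column. The point of the sketch is that a training pipeline should stop and report it instead.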

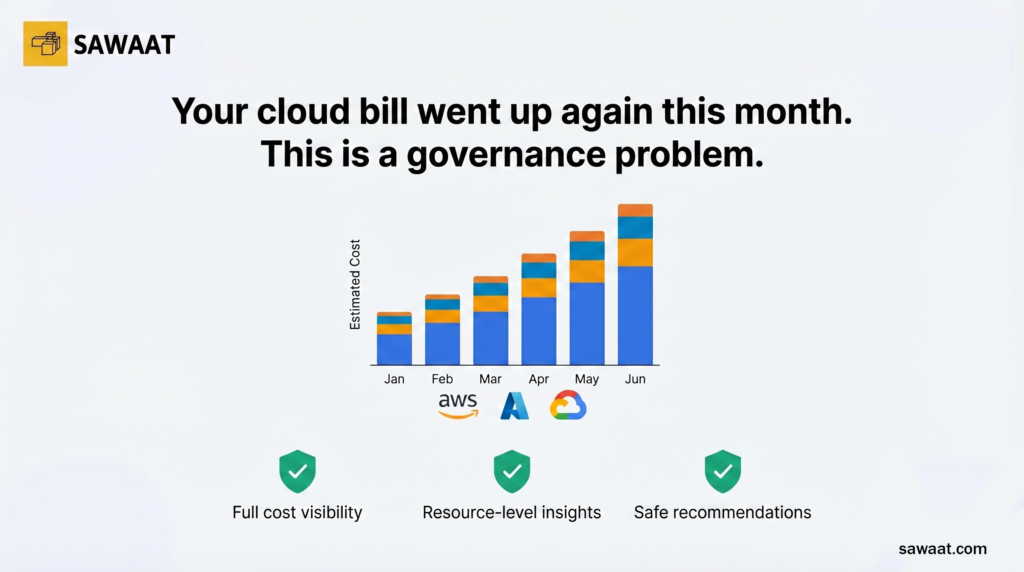

And then there’s scale. AI workloads, particularly training runs, push infrastructure in ways that business intelligence never did. Teams that didn’t architect for this find out the expensive way: runaway cloud costs, jobs that take three times longer than planned, and infrastructure that can’t keep pace with what the model needs.

Getting the architecture right before you scale the AI program isn’t perfectionism. It’s the difference between building something that compounds in value versus something you’ll have to tear down and rebuild in eighteen months.

The Technical Imperative of Data Readiness in Enterprise AI Systems

Enterprise scale changes the math on data quality problems. A small inconsistency, such as a field that sometimes stores a date as a string or a customer ID that is formatted two different ways across two systems, might cause a tolerable hiccup in a reporting environment. That same inconsistency, multiplied across six months of training data, can produce a model that’s systematically wrong in ways that are incredibly hard to trace back to the source.

That’s not hypothetical. It happens repeatedly, and it’s one of the reasons experienced AI Strategy Consulting practitioners spend so much time on data infrastructure before touching model architecture. The model is, in a very literal sense, the least of your problems if the data underneath it isn’t solid.

There’s also a regulatory dimension that’s growing harder to ignore. Across financial services, healthcare, and insurance, regulators are increasingly asking enterprises to explain AI-driven decisions. You can’t explain a decision if you can’t trace exactly what data informed it. That’s not a governance aspiration. At this point, for many industries, it’s a compliance requirement.

Five areas sit at the core of enterprise data readiness. Each one is worth understanding on its own terms.

Mapping Data Infrastructure to Downstream Model Requirements

One of the most common mistakes in enterprise AI builds is treating data engineering and machine learning as sequential phases. The data team does their thing, hands off a dataset, and then the ML team figures out what they actually needed. By that point, fixing it is painful.

The better approach is to design infrastructure against specific model requirements from the start. What features does the model need? In what format? How much history? With what update frequency? Those questions should drive pipeline design, not be answered after the fact.

Feature stores, data contracts, and schema registries exist precisely to formalize this alignment. They’re not overhead; they’re what keeps the pipeline producing exactly what the model expects, every time, without someone manually verifying it before each training run.
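As an illustration, the questions above (which features, how much history, what update frequency) can be written down as a small data contract and checked automatically. This is a sketch under stated assumptions: `FeatureContract`, its fields, and the churn example values are all hypothetical, not the interface of any particular feature store.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FeatureContract:
    """What a model needs from one feature table (all values illustrative)."""
    required_features: frozenset[str]
    min_history_days: int
    max_staleness_days: int


def contract_breaches(
    contract: FeatureContract,
    available_features: set[str],
    earliest_record: date,
    latest_record: date,
    today: date,
) -> list[str]:
    """Compare what the pipeline delivers with what the contract promises."""
    breaches = []
    missing = contract.required_features - available_features
    if missing:
        breaches.append("missing features: " + ", ".join(sorted(missing)))
    history_days = (latest_record - earliest_record).days
    if history_days < contract.min_history_days:
        breaches.append(f"history too short: {history_days} days")
    staleness_days = (today - latest_record).days
    if staleness_days > contract.max_staleness_days:
        breaches.append(f"data too stale: {staleness_days} days old")
    return breaches


churn_contract = FeatureContract(
    required_features=frozenset({"tenure_days", "tickets_90d", "monthly_spend"}),
    min_history_days=365,
    max_staleness_days=1,
)
print(contract_breaches(
    churn_contract,
    available_features={"tenure_days", "monthly_spend"},
    earliest_record=date(2024, 1, 1),
    latest_record=date(2024, 6, 30),
    today=date(2024, 7, 3),
))
```

Running a check like this before every training run is what replaces the manual verification step.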

When this mapping is done correctly, the payoff is immediate: less debugging, less wrangling, more time spent on the work that matters.

Reducing Noise, Bias, and Variance at the Data Layer

A model trained on noisy data learns noise. A model trained on biased data encodes that bias. This isn’t a new insight. It’s been true since the earliest days of applied machine learning, yet it remains one of the most underinvested areas in enterprise AI programs.

Part of the reason is that data quality problems are invisible until they’re not. A training dataset can look perfectly clean, with no null values and no obvious formatting issues, and still contain systematic representation gaps that make the model fail on certain customer segments, geographies, or time periods. That failure only becomes visible in production, after it’s already caused damage.

Addressing this at the data layer means implementing outlier detection at ingestion, auditing datasets for sampling bias and population coverage, applying consistent normalization strategies, and validating that training distributions reflect what the model will see in the real world.
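Two of these checks are simple enough to sketch with the standard library. The first flags outliers with Tukey’s interquartile-range rule, which is one common choice among several. The second compares how often each segment appears in training data versus production traffic. The function names, the 1.5 multiplier, and the 10-point tolerance are assumptions made for illustration, and both functions assume non-empty inputs.

```python
import statistics
from collections import Counter


def iqr_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


def coverage_gaps(
    training_segments: list[str],
    production_segments: list[str],
    tolerance: float = 0.10,
) -> dict[str, float]:
    """Segments whose training share trails their production share by more than tolerance."""
    train_counts = Counter(training_segments)
    prod_counts = Counter(production_segments)
    gaps = {}
    for segment, prod_count in prod_counts.items():
        prod_share = prod_count / len(production_segments)
        train_share = train_counts[segment] / len(training_segments)
        if prod_share - train_share > tolerance:
            gaps[segment] = round(prod_share - train_share, 3)
    return gaps


spend = [10.0, 12.0, 11.0, 13.0, 12.0, 11.0, 250.0]
print(iqr_outliers(spend))  # [250.0]

training = ["NA"] * 90 + ["EU"] * 10
production = ["NA"] * 60 + ["EU"] * 40
print(coverage_gaps(training, production))  # {'EU': 0.3}
```

The second check is the one that catches a dataset that looks clean yet underrepresents a segment the model will serve.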

This is where Data Consulting Services often generate the most immediate impact, not because the problems are exotic, but because they require systematic attention that most internal teams haven’t had the bandwidth to give them.

Embedding Auditability and Lineage into Data Workflows

If a model makes a decision you can’t explain, you have a problem. If you can’t even identify which version of which dataset was used to train that model, the problem is deeper than you thought.

Data lineage, meaning a record of exactly where data originated, how it was transformed, and what state it was in when it hit a training run, is what makes AI systems auditable. Without it, every regulatory inquiry becomes an archaeological dig. With it, you can reconstruct the history of any model decision with a query.

The key word is embedded. Lineage and auditability need to be built into pipelines as a structural feature, not added retrospectively when someone asks for it. Retroactively instrumenting a production data pipeline for lineage is genuinely painful. Building it in from the start costs a fraction of the effort.

Modern tooling (metadata capture systems, transformation trackers, lineage-aware catalog integrations) makes this manageable at enterprise scale. There’s no good reason to skip it.
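The idea of embedded lineage can be shown in a few lines: every transformation runs through a wrapper that records a content fingerprint of its input and output. This is only a sketch. `run_with_lineage`, the record fields, and the in-memory log are hypothetical stand-ins for what a real metadata system would capture.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(rows: list[dict]) -> str:
    """Content hash of a dataset; the same rows in the same order give the same value."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def run_with_lineage(step_name, transform, rows, lineage_log):
    """Apply a transformation and append a lineage record as a side effect."""
    output = transform(rows)
    lineage_log.append({
        "step": step_name,
        "input_fingerprint": fingerprint(rows),
        "output_fingerprint": fingerprint(output),
        "input_rows": len(rows),
        "output_rows": len(output),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    })
    return output


def drop_missing_spend(rows):
    # Example transformation: remove records with no spend value.
    return [r for r in rows if r.get("spend") is not None]


log = []
raw = [{"id": 1, "spend": 10.0}, {"id": 2, "spend": None}]
clean = run_with_lineage("drop_missing_spend", drop_missing_spend, raw, log)
print(log[0]["step"], log[0]["input_rows"], log[0]["output_rows"])
```

Because the record is written by the wrapper rather than by the author of each step, nobody has to remember to log anything.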

Eliminating Pipeline Redundancy and Compute Overhead

Walk through most large enterprise data environments, and you’ll find the same datasets being ingested and transformed multiple times, by multiple teams, independently. Team A built a customer feature pipeline. Team B built their own, slightly different version, because they didn’t know Team A’s existed, or they knew but couldn’t access it. Team C did the same thing six months later.

The compute cost of this is real. The coordination cost is worse. When three versions of “customer lifetime value” exist in three separate pipelines, each computed slightly differently, nobody can agree on which one is correct. Debugging discrepancies between them consumes engineering time that should be going toward building things.

Fixing this requires centralized orchestration, shared registries of data assets, and clear ownership of data products. It’s organizational as much as it’s technical, which is why it doesn’t get fixed without deliberate effort. But the payoff is significant: lower infrastructure costs, faster pipeline development, and data that’s actually consistent across the systems that consume it.
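A shared registry can start very small. The sketch below keeps one owned definition per data product and refuses a second registration under the same name. The class names and the in-memory dictionary are assumptions for illustration; a production registry would sit behind a catalog service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataProduct:
    name: str
    owner: str
    definition: str


class DataProductRegistry:
    """In-memory stand-in for a shared registry of data assets."""

    def __init__(self) -> None:
        self._products: dict[str, DataProduct] = {}

    def register(self, product: DataProduct) -> None:
        existing = self._products.get(product.name)
        if existing is not None:
            # Refuse a second definition instead of letting versions diverge.
            raise ValueError(
                f"{product.name!r} is already owned by {existing.owner}; reuse it"
            )
        self._products[product.name] = product

    def lookup(self, name: str) -> Optional[DataProduct]:
        return self._products.get(name)


registry = DataProductRegistry()
registry.register(DataProduct(
    name="customer_lifetime_value",
    owner="team-a",
    definition="sum of net revenue per customer over 24 months",
))
print(registry.lookup("customer_lifetime_value").owner)  # team-a
```

When Team B looks up the name first, they find Team A’s version instead of building a third one.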

Standardizing Data Practices Across Teams and Systems

Ask five teams in a large enterprise how they represent a null value, and you’ll probably get four different answers. Ask how they store timestamps, and you might get five. These aren’t pedantic concerns. They’re the kinds of inconsistencies that turn a join between two datasets into a multi-week engineering project.

AI initiatives almost always require combining data from multiple domains. A churn prediction model needs product usage data, transactional data, and support history, each built by different teams, in different systems, using different conventions. Without standardization, assembling a coherent training dataset means translating between all of them, every time.

Enterprise-wide data standards covering field naming, null handling, timestamp formats, entity identifiers, and categorical encodings are foundational infrastructure. They don’t only serve AI. They improve the consistency of every system in the organization. But for AI specifically, they’re the difference between feature engineering and endless data wrangling.
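Null handling and timestamp formats are the easiest of these standards to show in code. The sketch below maps several null spellings to `None` and converts timestamps to ISO 8601 strings in UTC. The `NULL_TOKENS` set and the rule that naive timestamps are treated as UTC are assumptions that a real standard would have to decide explicitly. Note also that `datetime.fromisoformat` only accepts a trailing `Z` from Python 3.11 onward.

```python
from datetime import datetime, timezone
from typing import Optional

# Assumed set of placeholder strings that different teams use for "no value".
NULL_TOKENS = {"", "null", "none", "n/a", "na", "nan", "-"}


def normalize_null(value):
    """Map the various null spellings to a single representation: None."""
    if value is None:
        return None
    if isinstance(value, str) and value.strip().lower() in NULL_TOKENS:
        return None
    return value


def normalize_timestamp(value) -> Optional[str]:
    """Return an ISO 8601 UTC string from epoch seconds or an ISO 8601 string."""
    value = normalize_null(value)
    if value is None:
        return None
    if isinstance(value, (int, float)):
        parsed = datetime.fromtimestamp(value, tz=timezone.utc)
    else:
        parsed = datetime.fromisoformat(value)
        if parsed.tzinfo is None:
            # Assumption: naive timestamps are already in UTC.
            parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc).isoformat()


print(normalize_timestamp(0))                            # 1970-01-01T00:00:00+00:00
print(normalize_timestamp("2024-03-01T12:00:00+02:00"))  # 2024-03-01T10:00:00+00:00
print(normalize_timestamp("N/A"))                        # None
```

Applied at ingestion, functions like these mean every downstream join sees one null convention and one timestamp format.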

Seven Engineering Steps to Production-Grade AI Data Infrastructure

The path from “data we have” to “data AI can use” isn’t arbitrary. It follows a consistent sequence, one that experienced AI Consulting Services teams apply across different industries, stacks, and organizational contexts. Here’s how it actually works in practice.

- Audit and Profile Your Existing Data Assets

You can’t fix what you haven’t measured. Before any remediation work starts, the priority is getting an honest picture of the current state: what data assets exist, where they live, who owns them, what they contain, and what quality characteristics they exhibit.

Data profiling tools can automate a lot of this: null rates, cardinality, value distributions, referential integrity checks. But the audit has an organizational dimension too. It surfaces ownership gaps, missing documentation, conflicting definitions of the same concepts across teams. Both dimensions matter.

The output is a data inventory that gives the engineering team something concrete to work from: not a vague sense that “data quality could be better,” but a specific map of exactly where the problems are and how severe they are.
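The automated half of the audit can be sketched briefly. The functions below compute a null rate, cardinality, and top values for one column, plus a basic referential integrity check between two tables. The table and column names are made up for the example, and dedicated profiling tools cover far more than this.

```python
from collections import Counter


def profile_column(rows: list[dict], column: str) -> dict:
    """Null rate, cardinality, and most common values for one column."""
    values = [row.get(column) for row in rows]
    non_null = [v for v in values if v is not None]
    return {
        "rows": len(values),
        "null_rate": round(1 - len(non_null) / len(values), 3) if values else 0.0,
        "cardinality": len(set(non_null)),
        "top_values": Counter(non_null).most_common(3),
    }


def orphaned_keys(child_rows: list[dict], parent_rows: list[dict], key: str) -> set:
    """Referential integrity: keys in the child table with no parent record."""
    parent_keys = {row[key] for row in parent_rows}
    return {row[key] for row in child_rows if row[key] not in parent_keys}


orders = [
    {"customer_id": "C1", "country": "US"},
    {"customer_id": "C2", "country": None},
    {"customer_id": "C9", "country": "US"},
    {"customer_id": "C1", "country": "DE"},
]
customers = [{"customer_id": "C1"}, {"customer_id": "C2"}]

print(profile_column(orders, "country"))
print(orphaned_keys(orders, customers, "customer_id"))  # {'C9'}
```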

- Resolve Quality Gaps, Inconsistencies, and Coverage Failures

With profiling data in hand, teams can get specific. Define quality thresholds for each data asset based on how it will be used and what the model needs, then build the cleaning and transformation logic to hit those thresholds consistently.

Coverage failures deserve particular attention here. Missing values in key features, underrepresented populations in training data, historical gaps that leave certain time periods completely uncovered: these are the quality problems most likely to produce models that look fine in development and fall apart in production. They’re also the hardest to catch if you’re not specifically looking for them.
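Historical gaps are a good example of a problem that only shows up when you look for it. A minimal sketch, assuming daily granularity and a set of dates on which at least one record exists:

```python
from datetime import date, timedelta


def missing_days(observed: set[date], start: date, end: date) -> list[date]:
    """Every calendar day in [start, end] with no records at all."""
    total_days = (end - start).days + 1
    expected = (start + timedelta(days=offset) for offset in range(total_days))
    return [day for day in expected if day not in observed]


def gap_ranges(days: list[date]) -> list[tuple[date, date]]:
    """Collapse a sorted list of days into contiguous (first, last) ranges."""
    ranges = []
    for day in days:
        if ranges and day - ranges[-1][1] == timedelta(days=1):
            ranges[-1] = (ranges[-1][0], day)
        else:
            ranges.append((day, day))
    return ranges


observed = {date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 5), date(2024, 1, 6)}
gaps = gap_ranges(missing_days(observed, date(2024, 1, 1), date(2024, 1, 7)))
for first, last in gaps:
    print(first.isoformat(), "to", last.isoformat())
```

The same pattern extends to other coverage dimensions, such as counting records per segment per month and flagging empty cells.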

- Enforce Governance Policies at the Pipeline Level

Governance that lives in a document somewhere doesn’t govern anything. The only governance that works is governance built into the pipeline: access controls, retention policies, and quality rules enforced automatically as data flows through the system, not checked manually before each use.

This includes tagging sensitive data at ingestion and applying the appropriate privacy transformations at the right stage. It also includes ensuring that lineage records are generated automatically, as a byproduct of normal pipeline execution, not as a separate logging exercise someone must remember to do.
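Here is a sketch of what pipeline-level enforcement might look like for sensitivity tags. The tag names, the `FIELD_TAGS` mapping, and the policy itself (drop PII, pseudonymize identifiers with a keyed hash, reject untagged fields) are illustrative assumptions, not a compliance recipe. Key management is out of scope here.

```python
import hashlib
import hmac

# Hypothetical sensitivity tags, assigned when a field is first ingested.
FIELD_TAGS = {"email": "pii", "customer_id": "identifier", "monthly_spend": "public"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Keyed hash: stable for joins, and cannot be reproduced without the key."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def apply_privacy_policy(record: dict, tags: dict, secret_key: bytes) -> dict:
    """Drop PII, pseudonymize identifiers, and reject untagged fields."""
    governed = {}
    for field, value in record.items():
        tag = tags.get(field)
        if tag is None:
            raise ValueError(f"field {field!r} has no sensitivity tag")
        if tag == "pii":
            continue  # never passes into the training set
        if tag == "identifier":
            governed[field] = pseudonymize(str(value), secret_key)
        else:
            governed[field] = value
    return governed


record = {"email": "a@example.com", "customer_id": "C1", "monthly_spend": 10.0}
governed = apply_privacy_policy(record, FIELD_TAGS, secret_key=b"demo-key-only")
print(sorted(governed))  # ['customer_id', 'monthly_spend']
```

Rejecting untagged fields is the important design choice: a new column cannot reach training data until someone has classified it.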

Organizations developing an AI Strategy Consulting framework should treat governance automation as a first-class engineering requirement, not a policy aspiration that gets deferred to a later phase.

- Normalize Schemas, Define Models, and Build a Centralized Catalog

Schema normalization brings data into consistent structural forms that can be reliably combined across domains. Data modeling formalizes the relationships between entities and establishes canonical representations for the concepts that matter most to the business: customers, products, transactions, events.

A centralized catalog makes all this findable. Engineers building new pipelines shouldn’t have to ask around to discover what data exists, who owns it, what it means, and whether they’re allowed to use it. The catalog answers those questions without requiring anyone to track down a colleague. That alone, reducing the coordination overhead of data discovery, is worth a meaningful portion of the investment.

- Adopt Scalable, AI-Native Data Architecture Patterns

Traditional data warehouse architecture was built for a different era of data workloads: structured queries against historical data, batch processing, rows and columns. AI workloads are something else entirely: large volumes of unstructured and semi-structured data, streaming ingestion, feature computation at scale, and separate but consistent datasets for training and serving.

Data lakehouses, feature stores, and streaming platforms aren’t new buzzwords to chase. They’re architectural responses to requirements that traditional warehouses genuinely can’t meet. The right choice depends on the specific use cases, the existing stack, and the organization’s operational maturity. But ignoring the architecture question because the current setup “mostly works” is a decision that tends to come back around.

- Build Automated Validation, Drift Detection, and Version Control into Pipelines

Data quality isn’t a checkpoint you pass once. Upstream systems change. User behavior shifts. Business processes evolve. Without continuous monitoring, those changes flow silently into model training and inference until something breaks visibly, usually in production, after the damage is done.

Automated validation checks run on every pipeline execution to catch regressions before they reach models. Statistical drift detection identifies when feature distributions have shifted enough to matter. Dataset version control makes it possible to reproduce any historical training run, understand exactly what data a model was trained on, and pinpoint when and where a quality problem entered the pipeline.
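For drift detection, one widely used statistic is the Population Stability Index (PSI), which compares how a feature is distributed across bins in a baseline sample and in a current sample. The sketch below is one way to compute it. The bin edges (assumed sorted in ascending order), the small floor for empty bins, and the commonly quoted rule of thumb that values above roughly 0.25 indicate a significant shift are all choices to tune per feature rather than fixed rules.

```python
import math


def population_stability_index(
    baseline: list[float],
    current: list[float],
    bin_edges: list[float],
    floor: float = 1e-4,
) -> float:
    """PSI = sum((cur - base) * ln(cur / base)) over shared bins."""

    def bin_shares(values: list[float]) -> list[float]:
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            # Bin index = number of edges at or below v; outer bins are open-ended.
            index = sum(1 for edge in bin_edges if v >= edge)
            counts[index] += 1
        # Floor each share so empty bins do not produce log(0).
        return [max(c / len(values), floor) for c in counts]

    base_shares = bin_shares(baseline)
    cur_shares = bin_shares(current)
    return sum(
        (cur - base) * math.log(cur / base)
        for base, cur in zip(base_shares, cur_shares)
    )


baseline = [float(v) for v in range(1, 11)]   # 1.0 .. 10.0
shifted = [float(v) for v in range(6, 16)]    # 6.0 .. 15.0
edges = [3.5, 7.5]

print(population_stability_index(baseline, baseline, edges))  # 0.0
print(population_stability_index(baseline, shifted, edges) > 0.25)  # True
```

A check like this can run on every pipeline execution, with the baseline taken from the dataset version the current model was trained on.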

None of this is complicated to build. It’s just rarely prioritized early enough.

- Establish Continuous Feedback Loops for Ongoing Data Health

Production models generate information that should flow back into the data pipeline. When predictions diverge from actual outcomes, that divergence points to something in the data. When performance degrades for a particular segment, that’s a signal about training data coverage. When users flag incorrect outputs, those flags can identify specific data quality problems at a granular level.

Closing this loop, connecting model monitoring back to data quality processes, turns data readiness from a project into an operational discipline. It’s what separates organizations where AI compounds in value over time from those where every new model requires the same remediation work all over again.
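A small sketch of the first step in such a loop: compute the error rate per segment from logged predictions and actual outcomes, then list the segments that should trigger a review of training data coverage. The record fields (`segment`, `predicted`, `actual`) and the threshold are hypothetical.

```python
from collections import defaultdict


def error_rate_by_segment(outcomes: list[dict]) -> dict[str, float]:
    """Share of wrong predictions per segment, from logged prediction/actual pairs."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for row in outcomes:
        totals[row["segment"]] += 1
        if row["predicted"] != row["actual"]:
            errors[row["segment"]] += 1
    return {segment: errors[segment] / totals[segment] for segment in totals}


def segments_needing_review(rates: dict[str, float], threshold: float) -> list[str]:
    """Segments whose error rate should trigger a look at training data coverage."""
    return sorted(segment for segment, rate in rates.items() if rate > threshold)


outcomes = [
    {"segment": "smb", "predicted": 1, "actual": 1},
    {"segment": "smb", "predicted": 0, "actual": 0},
    {"segment": "smb", "predicted": 1, "actual": 0},
    {"segment": "smb", "predicted": 0, "actual": 0},
    {"segment": "enterprise", "predicted": 1, "actual": 0},
    {"segment": "enterprise", "predicted": 0, "actual": 1},
]
rates = error_rate_by_segment(outcomes)
print(rates)                                 # {'smb': 0.25, 'enterprise': 1.0}
print(segments_needing_review(rates, 0.3))   # ['enterprise']
```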

Engineering Principles for Scalable AI Data Preparation

Beyond the seven steps, a set of principles governs how the best engineering teams approach data preparation for AI. These aren’t theoretical; they show up consistently in the organizations that get this right.

- Architect Governance Frameworks Around AI Pipeline Requirements

Governance frameworks designed for traditional data management tend to create friction in AI workflows. Review cycles and approval gates that are tolerable in a slower-moving analytics environment become blockers when data scientists need to iterate quickly. Governance for AI needs to be redesigned with AI workflows in mind: automated compliance checks, policy enforcement built into pipeline code, and fast access to properly governed data without manual sign-off at every step.

- Break Down Data Silos with Cross-Functional Engineering Collaboration

Data silos are an organizational problem that shows up as a technical one. Different teams control different data assets, build separate pipelines, and optimize for their own use cases without much regard for what adjacent teams need. Solving it requires both technical solutions (shared infrastructure, common APIs, centralized catalogs) and organizational ones, including joint planning, shared ownership models, and data contracts that formalize how teams expose data to each other.

In the experience of most Data Consulting Services practitioners, silo elimination is among the highest-leverage interventions available. The downstream effect on AI program velocity is hard to overstate.

- Shift Validation, Transformation, and Quality Checks to Automated Systems

Manual data quality processes don’t scale. As volumes grow and the number of pipelines increases, human review of every dataset becomes practically impossible. The engineering answer is automation: validation logic in pipeline code, test suites that run on every data update, and monitoring systems that catch anomalies without waiting for a human to notice something looks off.

This shift from manual to automated quality management is one of the clearest indicators of data infrastructure maturity. Organizations that achieve it can grow their AI programs without a proportional increase in data engineering headcount.

- Build on Open Formats and Interoperable Standards to Avoid Lock-In

AI tooling is evolving fast. Proprietary formats and vendor-specific APIs that seem fine today create real switching costs when something better comes along, and in this space, something better comes along regularly. Open formats like Apache Parquet, Delta Lake, and Apache Iceberg provide the interoperability that keeps infrastructure decisions reversible.

The same logic applies to model formats, feature store interfaces, and pipeline orchestration. Standards-based implementations are easier to maintain, easier to migrate, and easier to integrate with new tooling when requirements change.

- Define Metrics, Run Benchmarks, and Refine Preparation Logic Continuously

Data readiness should be measured. Not assumed, not audited once and forgotten, but measured, tracked, and acted on continuously. That means defining explicit metrics for each data asset: completeness rates, schema conformance, freshness relative to SLA, and feature coverage against model requirements.

Benchmarking datasets before they’re used for training prevents quality regressions from silently affecting model performance. Tracking those benchmarks over time reveals trends: assets that are degrading, pipelines that are becoming less reliable, coverage gaps that are widening. That information should feed directly back into data preparation logic, refining it as the data landscape evolves.
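Two of the metrics named above, completeness and freshness relative to SLA, are straightforward to compute and gate on. The sketch below assumes a non-empty list of records and uses made-up field names, a 24-hour SLA, and a 90% completeness threshold purely for illustration.

```python
from datetime import datetime, timedelta, timezone


def readiness_metrics(
    rows: list[dict],
    required_fields: list[str],
    last_updated: datetime,
    freshness_sla: timedelta,
    now: datetime,
) -> dict:
    """Completeness per required field plus freshness relative to an SLA."""
    completeness = {
        field: sum(1 for row in rows if row.get(field) is not None) / len(rows)
        for field in required_fields
    }
    age = now - last_updated
    return {
        "completeness": completeness,
        "age_hours": age.total_seconds() / 3600,
        "within_sla": age <= freshness_sla,
    }


def failed_thresholds(metrics: dict, min_completeness: float) -> list[str]:
    """Names of the checks that should block a training run."""
    failures = [
        f"completeness:{field}"
        for field, rate in metrics["completeness"].items()
        if rate < min_completeness
    ]
    if not metrics["within_sla"]:
        failures.append("freshness")
    return failures


rows = [
    {"tenure": 10, "spend": 5.0},
    {"tenure": 20, "spend": None},
    {"tenure": 30, "spend": 7.5},
    {"tenure": 40, "spend": 9.0},
]
metrics = readiness_metrics(
    rows,
    required_fields=["tenure", "spend"],
    last_updated=datetime(2024, 7, 1, 0, 0, tzinfo=timezone.utc),
    freshness_sla=timedelta(hours=24),
    now=datetime(2024, 7, 2, 6, 0, tzinfo=timezone.utc),
)
print(metrics)
print(failed_thresholds(metrics, min_completeness=0.9))
```

Storing the output of each run gives you the time series needed to spot the degrading assets and widening gaps described above.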

- Accelerate Pipeline Readiness with Dremio’s AI-Ready Data Lakehouse Platform

Assembling all the pieces described above (high-performance query execution, built-in governance, a semantic layer, open table format support) from separate tools and integrating them is a significant undertaking. Dremio’s Data Lakehouse Platform brings these capabilities together in a unified environment that’s built around open standards from the ground up.

For organizations that want to move from readiness assessment to production AI infrastructure without spending a year on tooling integration, Dremio is worth a close look. The open architecture means no proprietary lock-in, just infrastructure that works, at scale, with the AI stack you’re already building around.

Conclusion

AI readiness is not a destination; it’s an operational discipline that evolves alongside your data, your models, and your business. Data environments shift, models get deployed into new contexts, and requirements change faster than any one-time cleanup project can accommodate. The organizations that consistently get value from AI aren’t the ones that ran a data quality initiative years ago; they’re the ones that built the infrastructure to keep producing trustworthy, consistent data continuously.

The work compounds in a meaningful way. Every improvement to your data pipeline makes the next model faster to build, easier to validate, and more reliable once it hits production. Fixing quality at the source eliminates entire categories of downstream failure. Embedding governance into pipelines removes the manual overhead that slows iteration. Standardizing across teams turns data assembly from a weeks-long wrangling exercise into something routine. These aren’t one-off gains; they carry forward into every AI initiative that follows.

Whether your organization is tackling this internally or partnering with AI consulting services to accelerate the process, the fundamentals remain the same. Start with an audit that tells you the actual state of your data, not the assumed state. Resolve quality problems where they originate rather than patching them downstream. Build lineage, governance, and validation into pipelines as structural features rather than afterthoughts. Then close the loop: connect model performance signals back to data quality processes, so the system keeps improving on its own.

That’s the real definition of AI-ready data: not a clean dataset handed off once, but an infrastructure that earns trust every time it runs. Build that foundation, and your models will have everything they need to deliver on the promise AI holds.